AI, machine learning and deep learning explained

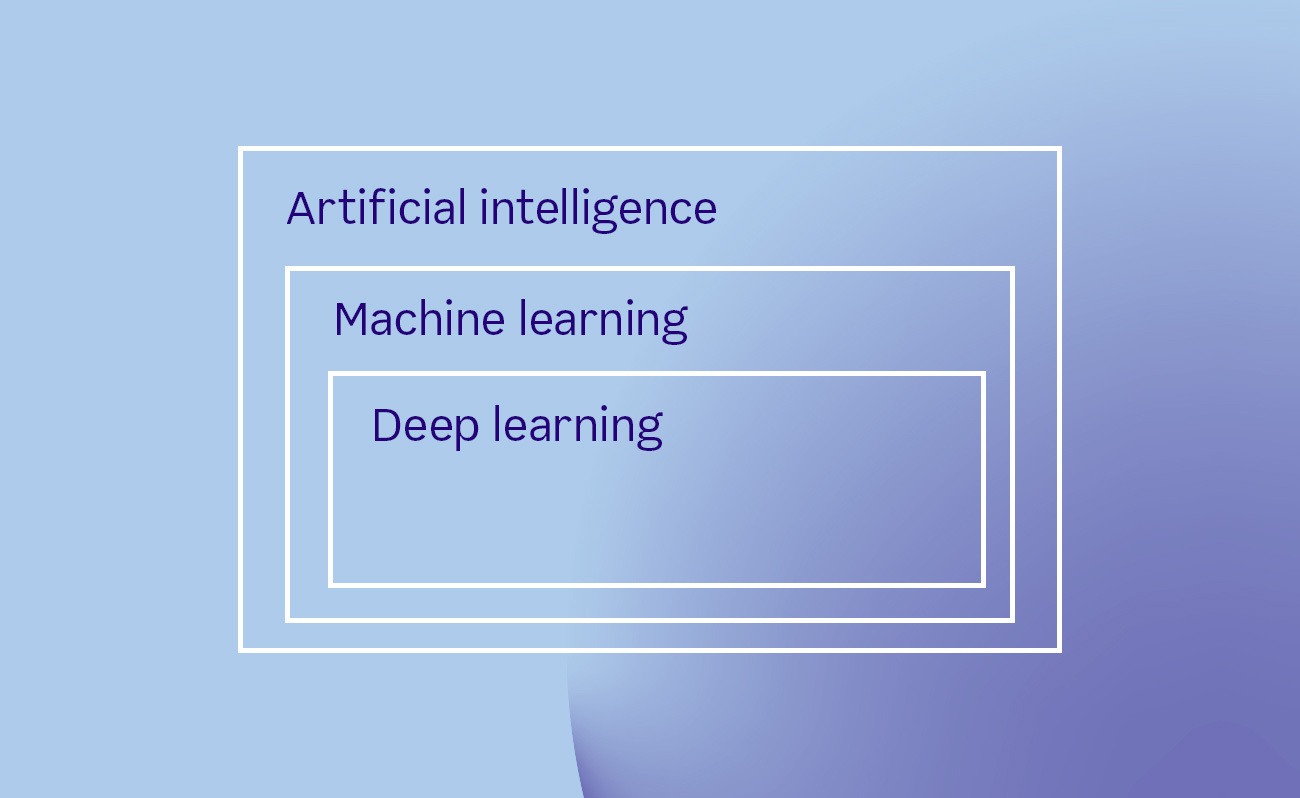

Artificial intelligence, machine learning and deep learning are terms often used when describing the latest and most exciting software. But what do these terms mean?

Artificial intelligence (AI) researchers dream of creating machines that can perform any intellectual task that human beings can. As soon as the first digital computers were built, people started to wonder if they could replicate human reasoning capabilities. Scholars have worked hard on this dream ever since.

No one has, however, yet managed to develop human-level intelligence in a machine and, despite what you sometimes read in over-enthusiastic news articles, we are not even close to that. Human-level AI, or artificial general intelligence (AGI), remains a long-term research agenda. The research effort hasn't been completely fruitless, though. While full AGI has turned out to be an elusive goal, researchers have produced many practical advances by focusing on subproblems of intelligence. This has given us functional speech and face recognition capabilities, among other things. Most progress today is made on these kinds of applied AI tasks that focus on one narrow problem at a time.

The definition of what is considered AI shifts over time. There is an old joke that as soon as somebody manages to implement an AI capability, people stop calling it AI. Thirty years ago an application that gives turn-by-turn navigation instructions to any address would most definitely have been considered AI. Now, it's just an app on your phone.

Some things that at first seem intelligent to a casual observer may not be AI. A famous example is the 1960s ELIZA chatbot, which posed as a psychotherapist. It used simple pattern matching rules for responding to user input. Despite its simplicity, it managed to convince many that there was more to it than there really was. As a more modern example, humanoid robots might seem life-like but may still be controlled by a simple script.
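To give a feel for how little machinery such a chatbot needs, here is a minimal sketch of pattern matching rules in Python. The rules, the reply templates and the `respond` function are made up for illustration and are not taken from the original ELIZA script.

```python
import re

# Hypothetical rules for illustration only; not the original ELIZA script.
# Each rule pairs a pattern with a reply template that reuses the matched text.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."


def respond(user_input: str) -> str:
    """Return the reply of the first rule whose pattern matches the input."""
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(*match.groups())
    # No rule matched: fall back to a generic prompt.
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("I am tired."))
    print(respond("The weather is nice"))
```

The program has no understanding of what the user says. It only echoes fragments of the input back inside canned sentences, which is often enough to look attentive.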

Often, AI is just marketing speak. Whenever you hear somebody hyping up how their app has AI, they should most likely be talking about machine learning instead.

Machine learning provides tools

Machine learning is a toolkit for implementing recommendations, predictions and other exciting application features. More precisely, it is a collection of statistical methods for finding solutions to problems from data. The goal of machine learning is to get computers to do things by presenting them with examples of what they should accomplish. This is different from the usual software development, where human engineers have to figure out the solution by themselves. Machine learning is a good approach in cases that are too complicated (what is the winning strategy in chess?) or too big (how to make personalized book recommendations for every Amazon customer?) for humans to solve directly.

Machine learning is not an automatic problem solver, though. Humans are still needed in the loop. Machine learning can only solve tasks that are described in precise mathematical terms. Humans also have to collect representative training data and validate the results.
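As a toy illustration of learning from examples, the sketch below fits a straight line to a handful of temperature pairs with ordinary least squares, instead of having a programmer write down the conversion formula. The function names `fit_line` and `predict` and the tiny dataset are assumptions chosen for this example. Note that a human still decided that a straight line is the right kind of model and supplied the training data.

```python
def fit_line(xs, ys):
    """Estimate slope and intercept of y = slope * x + intercept by least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Training examples: temperatures in Celsius and the matching Fahrenheit values.
celsius = [0.0, 10.0, 20.0, 30.0, 40.0]
fahrenheit = [32.0, 50.0, 68.0, 86.0, 104.0]

# The "learning" step: the parameters come from the data, not from a programmer.
slope, intercept = fit_line(celsius, fahrenheit)


def predict(c):
    """Apply the learned line to a new Celsius value."""
    return slope * c + intercept


if __name__ == "__main__":
    print(slope, intercept)
    print(predict(100.0))
```

Real applications use far richer models and noisier data, but the division of labor is the same: people frame the problem and provide examples, and the algorithm estimates the parameters.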

You can read more about use cases for machine learning in an earlier blog post by my colleague.

Deep learning for complex problems

Deep learning is one particular family of machine learning methods. Deep learning is currently popular and responsible for many recent advances in image and speech recognition.

In traditional (that is, non-deep) machine learning, a human data scientist has to spend considerable effort on figuring out how the raw data should be represented so that a machine can learn efficiently. Deep learning is able to mostly overcome this costly step by automatically constructing suitable data representations. The "deep" in the name refers to the fact that the learned representations are hierarchical. This makes deep learning a good match for many complicated real-world problems, because those often have a hierarchical structure (for example, speech is a thought expressed as words that consist of phonemes that consist of digitized sound samples).

Machine learning methods tend to perform better the more data is available for learning. But there is an upper limit: at some point the performance saturates and stops improving with more data. Deep learning scales up much further than other types of machine learning. Therefore, deep learning is the method of choice when dealing with extremely big datasets. The price to pay is that the required computational power can be huge.

Other approaches to AI

In the past, there have been numerous other proposals besides machine learning for tackling AI problems. Expert systems strive to encode human intelligence as inference rules and knowledge databases. They have been used, for example, in diagnosing system malfunctions. Some tasks can be solved by applying logic, search and raw computation. Deep Blue, the computer that beat human chess champions in the 1990s, was essentially using a brute-force search through the game tree. There have been many attempts at building biologically inspired architectures that aim to directly mimic how the human brain works, but none has yet taken off.

These alternative approaches have enjoyed success only in limited application domains or have fallen out of favor because deep learning and other machine learning based approaches have turned out to be more effective.
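To make the idea of brute-force game tree search concrete, here is a minimal sketch for a much simpler game than chess. Two players alternately take one, two or three stones from a pile, and whoever takes the last stone wins. The game and the names `current_player_wins` and `best_move` are assumptions chosen for illustration; this is not Deep Blue's actual algorithm, which needed many refinements to cope with the size of the chess game tree.

```python
MOVES = (1, 2, 3)  # a player may take one, two or three stones per turn


def current_player_wins(stones: int) -> bool:
    """Return True if the player to move can force a win with `stones` left.

    The search tries every legal move and recurses on the resulting position.
    With zero stones there are no legal moves, so the player to move has lost
    (the opponent took the last stone).
    """
    return any(
        take <= stones and not current_player_wins(stones - take)
        for take in MOVES
    )


def best_move(stones: int):
    """Return a winning move for the player to move, or None if none exists."""
    for take in MOVES:
        if take <= stones and not current_player_wins(stones - take):
            return take
    return None


if __name__ == "__main__":
    print(best_move(10))
    print(best_move(4))
```

No learning is involved here. The program plays perfectly only because the game is small enough to examine every possible continuation.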

Antti Ajanki, Lead Data Scientist