Part 1 — The Agentic Enterprise is here: Navigating the AI tool explosion

Most enterprise AI conversations still start in the wrong place: model selection. The real question is bigger: how do we turn AI into secure, reliable, and repeatable work inside real systems, with predictable economics? That’s the shift from “answers” to “outcomes,” and it’s why agents are suddenly everywhere.

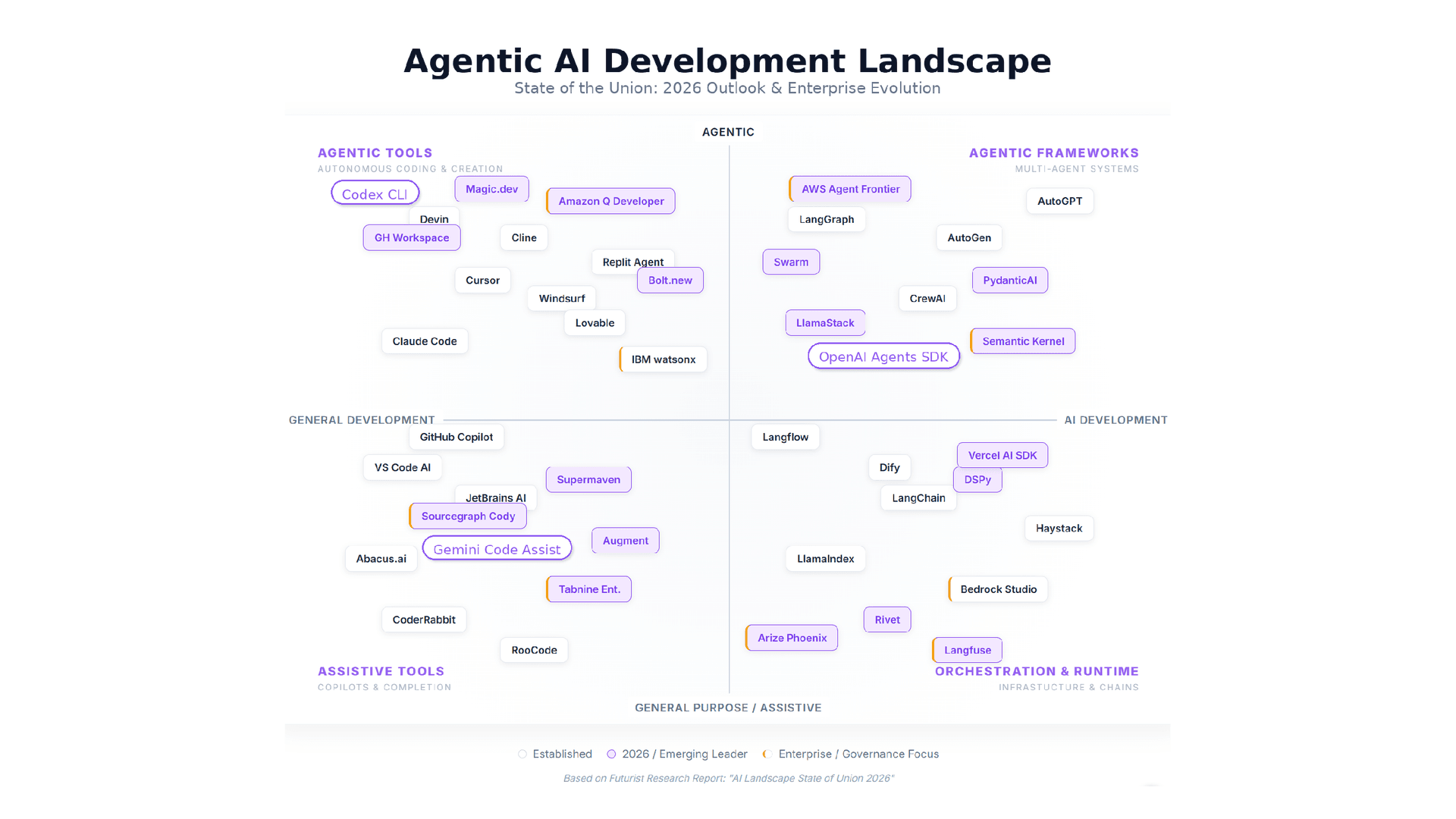

As agents spread, the ecosystem has exploded: agent types, orchestration frameworks, agent runtimes, retrieval stacks, evaluation tools, safety layers, connectors, and copilots embedded in every SaaS product. It can feel like chaos. It isn’t. It’s specialization: an industry rapidly discovering what’s required to run AI as part of the enterprise operating system.

This post maps the landscape and offers a practical rubric for choosing the right architecture and tools for your specific workflow without chasing hype or creating lock-in. In Part 2, we’ll look at why PoCs stall and what “enterprise-grade” actually means in architecture terms.

Executive summary (for leaders who skim)

- Agentic AI shifts value from text generation to multi-step work completion: plan → act → verify → iterate.

- Tool proliferation is the market responding to diverse enterprise constraints (risk, integration surface, latency, compliance).

- The moment an agent can write to systems of record, governance and operations become mandatory, not optional.

- Pick tooling from the use case backwards: classify workflow type, risk tier, and non-functional requirements before choosing frameworks or vendors.

1) The shift: From answers to outcomes

The first wave of GenAI in enterprises delivered copilots: summarization, drafting, and chat-based Q&A—valuable capabilities but still limited in scope. Agentic AI changes the center of gravity. Instead of stopping at a response, agents can decompose goals, call tools, verify outputs, and complete tasks. This shift is also why non-functional requirements (security, reliability, cost, compliance) are becoming the differentiator rather than model benchmarks.
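To make the loop concrete, here is a minimal sketch of plan, act, verify, iterate in Python. It assumes nothing about a specific framework: `run_agent`, `AgentRun`, and the `toy_*` functions are hypothetical names standing in for a real planner, tool call, and checker.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRun:
    goal: str
    steps_done: list[str] = field(default_factory=list)
    attempts: int = 0


def run_agent(
    goal: str,
    plan: Callable[[str], list[str]],
    act: Callable[[str], str],
    verify: Callable[[str, str], bool],
    max_attempts_per_step: int = 3,
) -> AgentRun:
    """Plan once, then act and verify each step, retrying on failed checks."""
    run = AgentRun(goal=goal)
    for step in plan(goal):
        for _ in range(max_attempts_per_step):
            run.attempts += 1
            result = act(step)
            if verify(step, result):
                run.steps_done.append(step)
                break
        else:
            # Verification never passed: stop instead of building on bad state.
            raise RuntimeError(f"step failed verification: {step}")
    return run


# Toy stand-ins (hypothetical) for a planner, a tool call, and a checker.
def toy_plan(goal: str) -> list[str]:
    return [f"{goal}: gather", f"{goal}: draft"]


def toy_act(step: str) -> str:
    return step.upper()


def toy_verify(step: str, result: str) -> bool:
    return result == step.upper()


run = run_agent("close ticket", toy_plan, toy_act, toy_verify)
print(run.steps_done, run.attempts)
```

The point of the sketch is the shape, not the logic: the verify step and the retry cap are where reliability and cost become design decisions rather than model properties.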

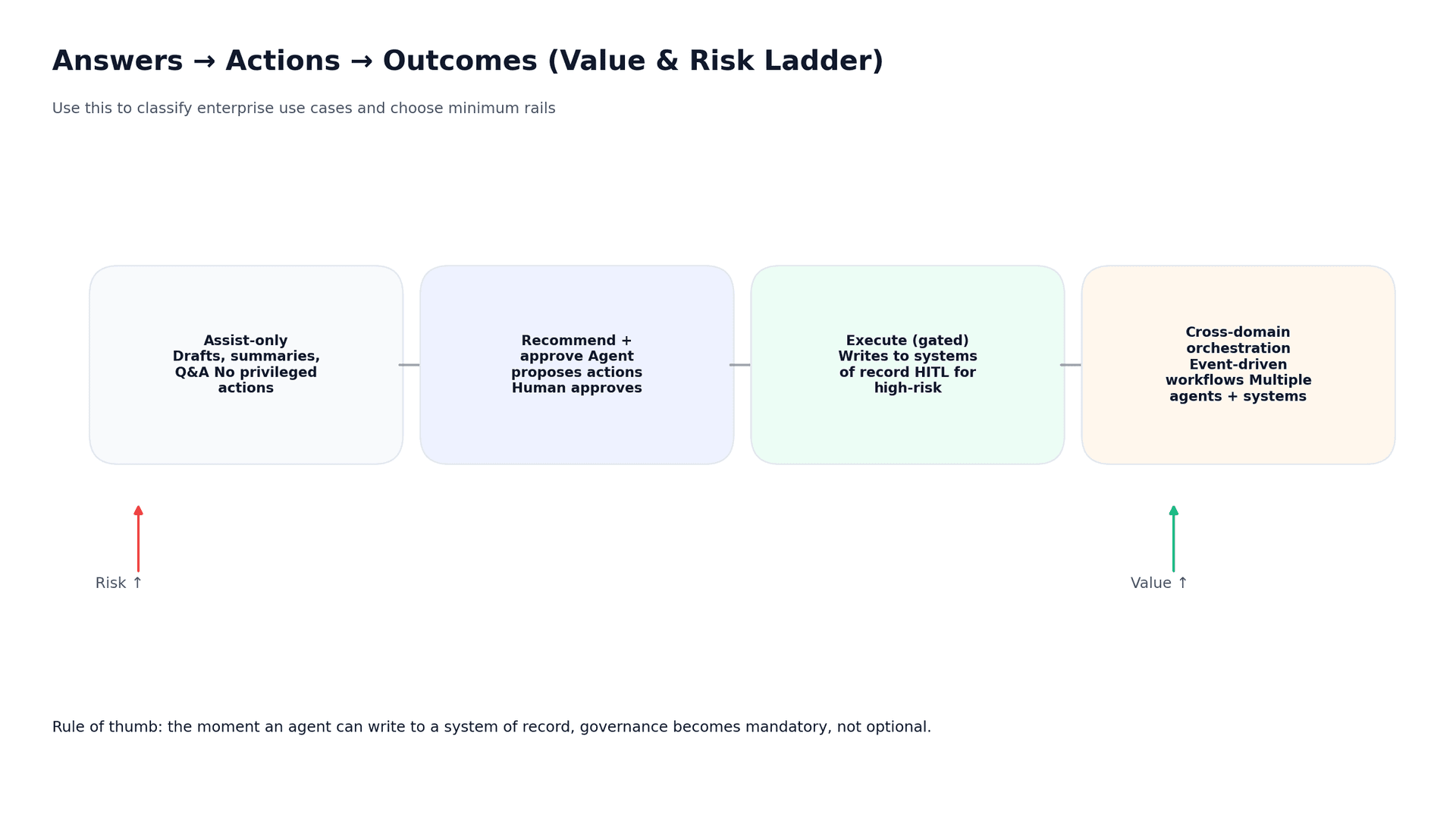

A useful way to anchor the conversation is the “answers → actions → outcomes” ladder (Figure 1). If your use case is assist-only, you can move fast in a safe sandbox. If it moves into recommending actions that humans approve, you need robust audit trails and explicit decision boundaries. And once the system can execute actions—or orchestrate cross-domain workflows—you need enterprise rails: the shared, reusable production guardrails that make agents safe and operable inside a real company. Concretely, enterprise rails include identity and non-human identities (NHIs), least-privilege access, safety scanning and policy enforcement (e.g., prompt-injection/PII controls), end-to-end observability (traces, logs, metrics), and cost controls (budgets, routing, caps) so the agent behaves predictably at scale.
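One way to put the ladder to work is as a gap check: given a use case's rung, which controls are still missing? The sketch below is illustrative only. The `Rung` enum, the control names, and the assumption that execute-level agents also need the recommend-level controls are ours, not a standard.

```python
from enum import IntEnum


class Rung(IntEnum):
    ASSIST = 1      # answers only, sandboxed
    RECOMMEND = 2   # proposes actions that a human approves
    EXECUTE = 3     # executes actions or orchestrates workflows


RECOMMEND_CONTROLS = {"audit_trail", "decision_boundaries"}
ENTERPRISE_RAILS = {
    "non_human_identity",
    "least_privilege",
    "safety_scanning",
    "observability",
    "cost_controls",
}

# Assumption: execute-level use cases keep the recommend-level controls too.
REQUIRED = {
    Rung.ASSIST: set(),
    Rung.RECOMMEND: RECOMMEND_CONTROLS,
    Rung.EXECUTE: RECOMMEND_CONTROLS | ENTERPRISE_RAILS,
}


def missing_controls(rung: Rung, in_place: set[str]) -> list[str]:
    """Return the controls a use case still needs before it can ship."""
    return sorted(REQUIRED[rung] - in_place)


gaps = missing_controls(
    Rung.EXECUTE, {"audit_trail", "observability", "least_privilege"}
)
print(gaps)
```

A check like this can run in a design review or a deployment pipeline, so that a use case cannot climb a rung without its rails.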

2) Why the ecosystem is exploding (and why it will continue)

If all enterprises had the same workflows and risk profiles, the market would consolidate to a single runtime. Instead, enterprise workflows differ by regulation, data sensitivity, integration surface area, latency, budgets, and failure tolerance. So the ecosystem specializes: managed runtimes optimize operations and governance; frameworks optimize flexibility and portability; orchestration and evaluation tools fill the gap between demos and production systems.

Agent architectures must match very different enterprise tasks (for example, research versus autonomous coding) and their security profiles, so no single framework can cover everything. Expect the tool ecosystem, especially coding agents, to keep growing beyond 2026 (see Figure 2).

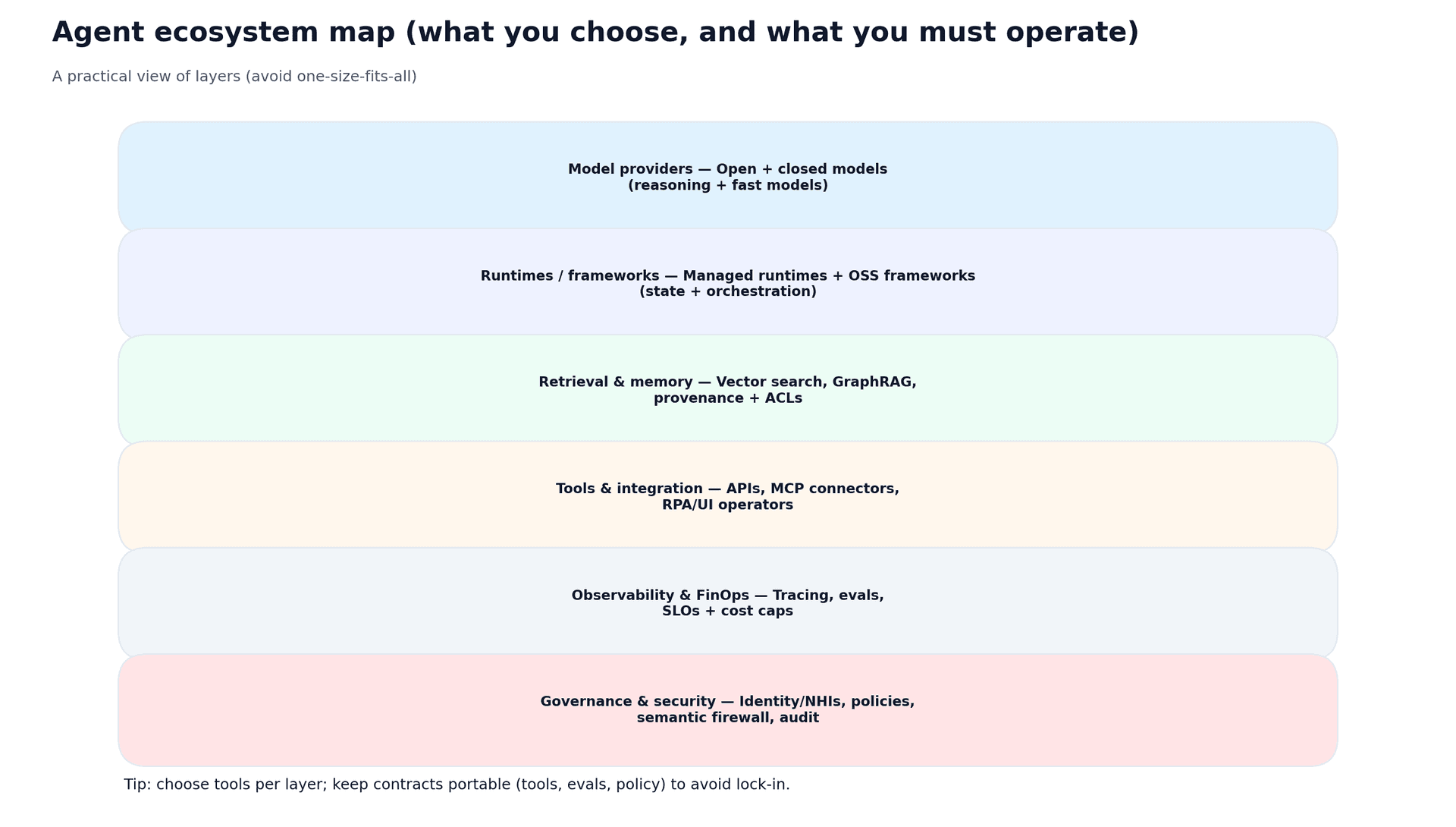

The useful mental model: choose per layer, not per trend

- Stop asking “which framework is best?” Ask “which layer do we need to own vs outsource?”

- Keep portability in the contracts: tool interfaces, evaluation harnesses, and policy gates.

- Treat observability and FinOps as first-class features, not afterthoughts.
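"Portability in the contracts" can be as simple as defining tool and policy interfaces that your own code owns. The following is a rough sketch using Python's `typing.Protocol`. The names `Tool`, `PolicyGate`, `call_tool`, `TicketLookup`, and `ReadOnlyGate` are hypothetical and not taken from any particular framework.

```python
from typing import Any, Protocol


class Tool(Protocol):
    """Contract every tool adapter satisfies, whatever framework hosts it."""

    name: str

    def invoke(self, args: dict[str, Any]) -> dict[str, Any]: ...


class PolicyGate(Protocol):
    """Contract for a check that runs before any tool call."""

    def allows(self, tool_name: str, args: dict[str, Any]) -> bool: ...


def call_tool(tool: Tool, gate: PolicyGate, args: dict[str, Any]) -> dict[str, Any]:
    """Framework-agnostic call path: policy check first, then the tool."""
    if not gate.allows(tool.name, args):
        raise PermissionError(f"policy gate blocked {tool.name}")
    return tool.invoke(args)


class TicketLookup:
    """Example adapter (hypothetical) for a read-only ITSM query."""

    name = "ticket_lookup"

    def invoke(self, args: dict[str, Any]) -> dict[str, Any]:
        return {"ticket": args["id"], "status": "open"}


class ReadOnlyGate:
    """Example policy (hypothetical): only lookup-style tools are allowed."""

    def allows(self, tool_name: str, args: dict[str, Any]) -> bool:
        return tool_name.endswith("_lookup")


result = call_tool(TicketLookup(), ReadOnlyGate(), {"id": "T-1"})
print(result)
```

If the framework or vendor changes, only the adapters change. The contract, the policy gate, and any evaluation harness written against them stay put.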

3) Agent archetypes: Why architecture differs by use case

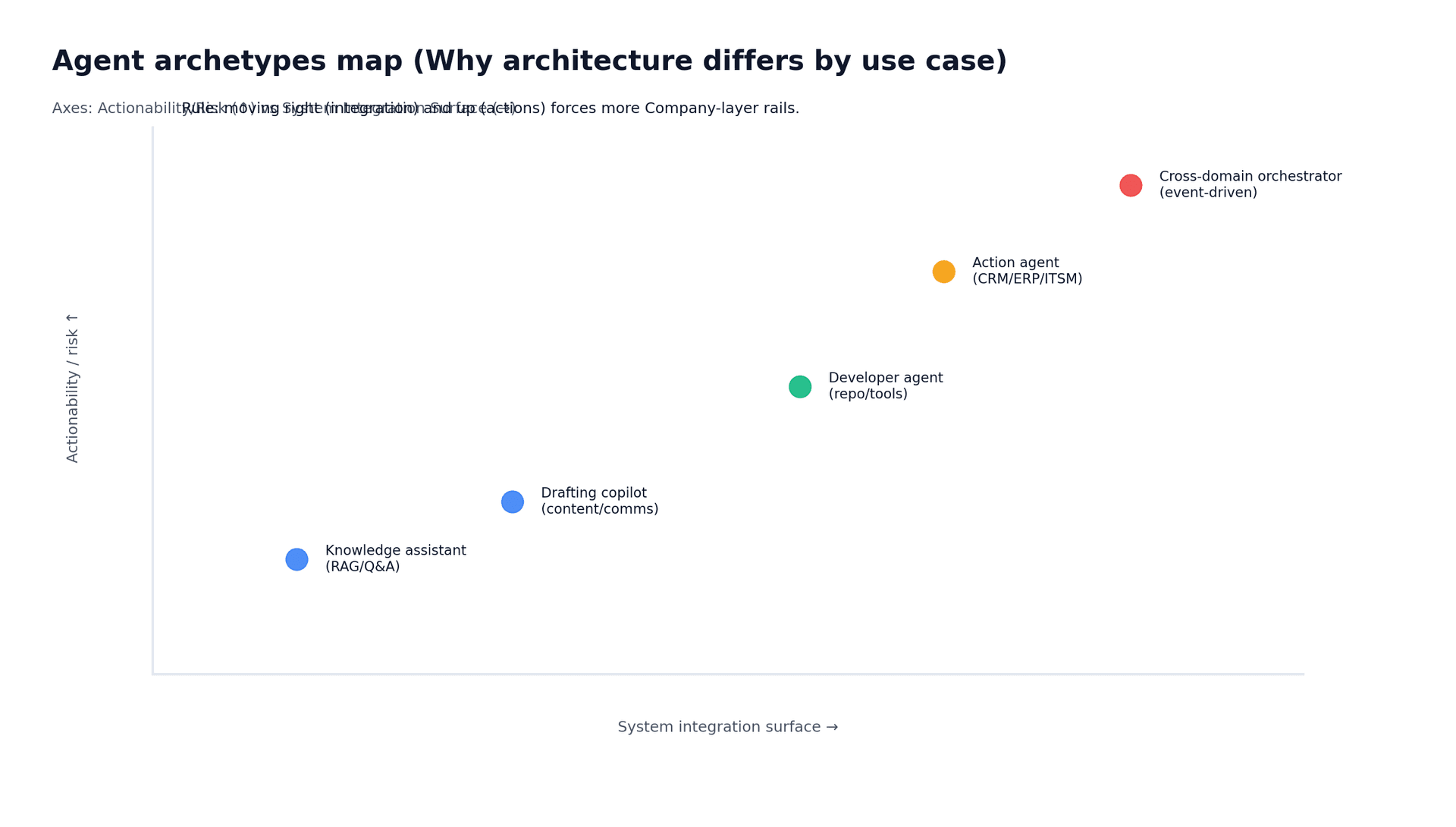

Not all agents are built the same. A knowledge assistant operating on internal documentation has different risks and architecture needs than an ITSM remediation agent that can change production systems.

As you move right (more integrations) and up (more actionability), your architecture must shift. Read-heavy agents demand retrieval quality, provenance, and access control. Action agents demand least privilege, approvals, idempotency, and audit-grade logs. Cross-domain orchestrators demand an event backbone, coordination patterns, and portfolio-level governance.
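For action agents, those four demands can be sketched in a few dozen lines. The example below is a simplified illustration under stated assumptions: `ActionExecutor` and `restart_service` are hypothetical, the idempotency key is derived from the action and its parameters, and state is held in memory. A production system would need a durable store and, most likely, caller-supplied idempotency keys.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ActionExecutor:
    allowed_actions: set[str]                 # least privilege: explicit allow-list
    needs_approval: set[str]                  # actions gated on a human decision
    approve: Callable[[str, dict], bool]      # hypothetical approval hook
    audit_log: list[dict] = field(default_factory=list)
    _results: dict[str, str] = field(default_factory=dict)

    def execute(self, action: str, params: dict, handler: Callable[[dict], str]) -> str:
        # Same action + params gives the same key, so a retry is not re-applied.
        key = hashlib.sha256(
            json.dumps([action, params], sort_keys=True).encode()
        ).hexdigest()
        if key in self._results:
            self._record(action, key, "replayed")
            return self._results[key]
        if action not in self.allowed_actions:
            self._record(action, key, "denied")
            raise PermissionError(f"action not permitted: {action}")
        if action in self.needs_approval and not self.approve(action, params):
            self._record(action, key, "rejected")
            raise PermissionError(f"approval refused: {action}")
        result = handler(params)
        self._results[key] = result
        self._record(action, key, "executed")
        return result

    def _record(self, action: str, key: str, outcome: str) -> None:
        # Every path, including denials, leaves an audit entry.
        self.audit_log.append({"action": action, "key": key, "outcome": outcome})


calls: list[str] = []


def restart_service(params: dict) -> str:
    calls.append(params["service"])
    return f"restarted {params['service']}"


executor = ActionExecutor(
    allowed_actions={"restart_service"},
    needs_approval={"restart_service"},
    approve=lambda action, params: True,  # stand-in for a real approval step
)
first = executor.execute("restart_service", {"service": "billing"}, restart_service)
second = executor.execute("restart_service", {"service": "billing"}, restart_service)
print(first, len(calls), [e["outcome"] for e in executor.audit_log])
```

Note the trade-off in deriving the key from the request content: two intentional, identical actions collapse into one. That is why real systems usually scope the key to a request or workflow ID.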

4) The agent stack: What you choose vs what you must operate

A common failure pattern is “we picked a model and built a prototype” without designing the rest of the stack. Production agents require layers: retrieval and memory, tool integration, observability, safety, and cost controls. The goal is to make the safe path the default path.

5) Cost, latency, and reliability: Why routing becomes inevitable

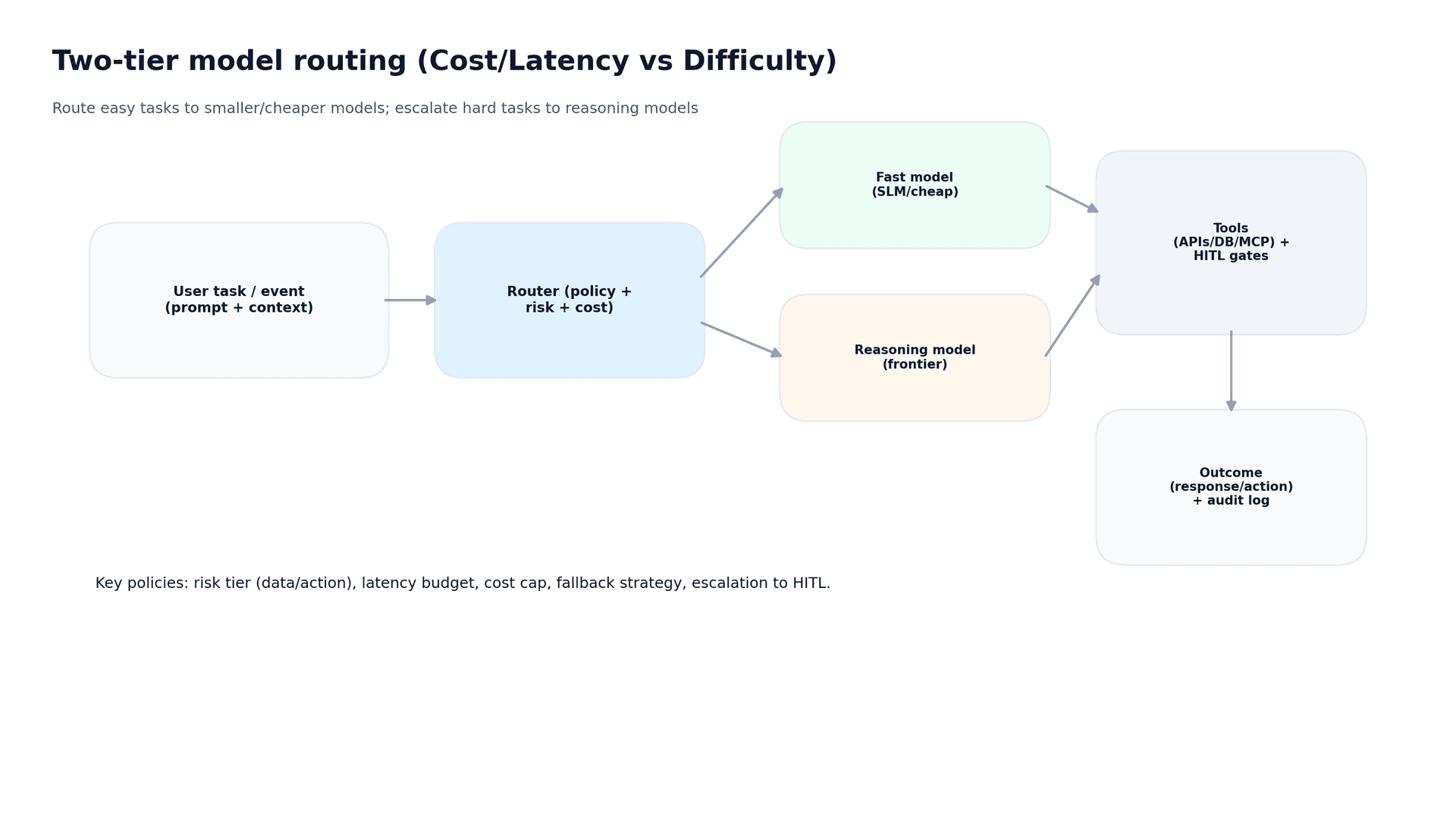

As usage grows, cost and latency stop being theoretical concerns and become operational constraints. Enterprises converge on routing: using fast, cheap models for easy tasks, escalating to reasoning models for hard tasks, and applying human approval gates for high-risk actions. This is how you manage unit economics without sacrificing quality.
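A routing policy does not have to be elaborate to be useful. Here is a minimal sketch, assuming a difficulty score already exists (for instance from a lightweight classifier) and using placeholder model names. `Task`, `Route`, `route`, and the 0.6 threshold are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    prompt: str
    difficulty: float   # 0.0 (easy) to 1.0 (hard); assumed to come from a classifier
    high_risk: bool     # e.g. the task writes to a system of record


@dataclass(frozen=True)
class Route:
    model: str                  # placeholder tier name, not a real model ID
    needs_human_approval: bool


def route(task: Task, escalation_threshold: float = 0.6) -> Route:
    """Send easy tasks to the cheap tier, hard ones to the reasoning tier."""
    if task.difficulty >= escalation_threshold:
        model = "reasoning-model"
    else:
        model = "fast-model"
    # Risk is orthogonal to difficulty: an easy task can still need sign-off.
    return Route(model=model, needs_human_approval=task.high_risk)


easy = route(Task("Summarize this ticket", difficulty=0.2, high_risk=False))
risky = route(Task("Roll back the release", difficulty=0.8, high_risk=True))
print(easy, risky)
```

Keeping difficulty and risk as separate inputs matters: model escalation is a cost decision, while the approval gate is a governance decision.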

6) A pragmatic selection rubric (use-case driven)

Before you pick tools, answer these questions in order:

- Outcome: What business metric changes if this works (cycle time, cost, risk, conversion)?

- Workflow type: Does the agent read, recommend, or execute?

- Risk tier: What data does it touch, and what actions can it take?

- Non-functionals: What are the SLOs/SLAs, audit requirements, residency constraints, and cost caps?

- Integration surface: Which systems of record/engagement does it need (CRM/ERP/ITSM/Git), and is it event-driven?

- Reuse strategy: What should become a reusable capability for the next 10 use cases (connectors, policies, evals, observability)?

Avoid these three traps

- The “Everything Bot” trap: broad scope with weak controls. Start with 1–2 narrow action agents tied to cash-flow outcomes.

- The “Platform with no pull” trap: building a landing zone before you have a use case that creates adoption pressure.

- The “Messy Foundation” trap: building clean AI on unvetted “Shadow AI” tools or messy data. To prevent this, Futurice uses a “Mess-O-Meter” to assess technical debt. If the debt is critical, we pause AI development to build a governed Lean Data Product first, so that agents run exclusively on clean, governed data.

What to do next

If you remember one thing: pick architecture from the use case backwards. Classify a small set of high-value workflows using the ladder in Figure 1, and define your minimum rails before selecting a framework.

In Part 2 of this series, we will dive into the friction that occurs when these probabilistic AI agents meet deterministic enterprise constraints, exploring security, architecture, and Service Level Agreements (SLAs).

Adamu Haruna, Tech Principal
