The Automation Paradox - Why legacy governance is the new technical debt

The mandate for banking leaders in 2026 is clear: automate to survive. Scale financial crime detection. Accelerate product delivery. Move artificial intelligence from pilot to production. Neither demand nor ambition is the bottleneck. The issue is the inability to execute at the pace the market requires.

Both the logic and the investment case are sound — technology budgets across UK financial services have never been higher. Yet the promised gains in speed and capacity remain stubbornly elusive.

This is the Automation Paradox. In a heavily regulated industry, automation without engineered compliance doesn't just create risk — it accelerates it. Models scale. Audit trails don't. Deployment pipelines move faster than the governance wrapped around them. And when risk outpaces an institution's ability to demonstrate control, the rational organisational response is to pull the emergency brake — triggering a structural slowdown at precisely the moment when the pressure to accelerate is greatest.

The compliance tax

Recent estimates suggest that compliance now absorbs roughly 13% of operating costs across UK financial services, with mandatory regulatory change and remediation consuming as much as 18% of IT spend.

Taken together, these figures imply that up to a third of total change capacity is diverted from innovation into what might be called a compliance tax — a persistent drain in which technology and operations resources are trapped in manual evidence generation, reactive remediation, and process rework.

Why call it a tax? Because it behaves like one: unavoidable, compounding, and paid regardless of performance. But unlike a levy from the Treasury, this one is self-inflicted. Most banking governance was built for a world of annual audits and paper trails, where proving control meant assembling a binder once a year. That world no longer exists. Regulators now expect something closer to continuous, real-time assurance. The gap between those two operating models is enormous, and it is inside that gap that capacity quietly bleeds away.

Where the tax strikes

Percentages and cost ratios are useful, but they obscure where the pain shows up. To see the compliance tax in action, look at the processes that sit closest to the client — the ones where delays are felt in real commercial terms.

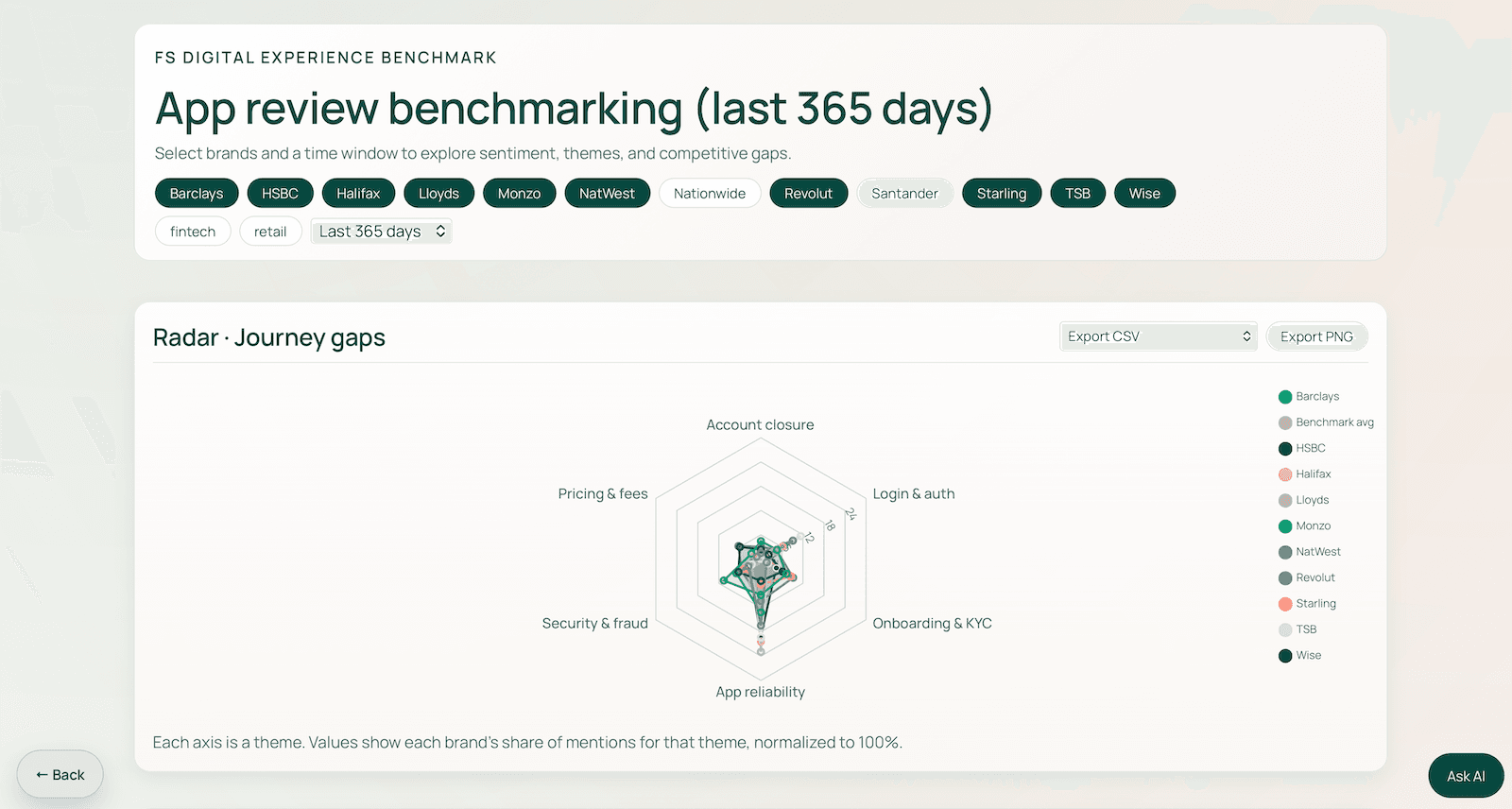

Onboarding is a good place to start. A standard retail account opens quickly enough, but try bringing on a multi-entity corporate with operations across several jurisdictions, or a fast-growing business with a risk profile that doesn't fit neatly into existing categories, and the timeline balloons to weeks, or even months. The reasons are depressingly consistent: manual verification steps that can't be skipped, data sitting in systems that don't talk to each other, and the same checks being run multiple times by different teams who don't realise they're duplicating work. Every additional layer of client complexity makes it worse: more time, more cost, and a client experience that deteriorates before the relationship has properly started. Tools like the CX Risk Radar show just how clearly customers signal this friction, and how quickly it compounds into competitive disadvantage.

Lifecycle management is no better. KYC reviews, periodic re-verification, ongoing due diligence — at most institutions, this remains overwhelmingly manual work. Teams spend so much capacity on maintaining existing client relationships that there's barely any left to win new ones.

Financial crime detection tells a grimly similar story, though the mechanics differ. Legacy monitoring platforms throw up far more alerts than any human team can realistically work through, and the resulting backlog becomes a permanent fixture — not a temporary problem to be solved but a structural feature of the operating model. At root, this is a data problem. Rules-based engines pulling from fragmented, siloed information simply can't produce the kind of contextual intelligence that modern risk detection demands. Automation layered onto that broken foundation doesn't buy you efficiency. It buys you more noise.

In software delivery, the friction between modern engineering velocity and manual governance is stark. When compliance operates as a gate at the end of a release cycle rather than being embedded throughout it, every deployment slows — regardless of how agile the engineering team may be. Manual sign-offs are incompatible with the ephemeral nature of cloud-native infrastructure. The evidence they produce is often outdated by the time it is reviewed.

In artificial intelligence, the constraint is not capability but control. Banks have invested heavily, yet most initiatives struggle to move beyond the pilot stage. If models scale faster than the audit trail beneath them, explainability gaps become the next remediation cycle before the current one is resolved. The issue isn’t that institutions lack ambition for AI, but that they lack the architecture to govern it at scale.

Across all these domains, the underlying pattern is identical. Governance is manual, reactive, and bolted on after the fact.

From reporting to engineering

Blaming the regulators is tempting, and common. But it misses the point. Regulatory pressure hasn't fundamentally changed in character; what varies, bank by bank, is how well that pressure gets absorbed. Some institutions buckle under it while others barely notice. The difference between them lies not in the regulatory burden but in the architecture.

Legacy governance, seen this way, has become a particularly insidious form of technical debt. It won't appear on any balance sheet, but its drag on delivery is measurable in missed launches, stalled programmes, and quarters spent fixing problems that better design would have prevented. Resolving this means treating compliance as something to be engineered — woven into how systems actually work — rather than something to be reported on after the fact. Thicker policy documents and better-formatted spreadsheets won't get the job done.

What actually works tends to follow a pragmatic pattern, starting with resisting the instinct to rip out and replace. Full platform overhauls are a recipe for multi-year programmes that rarely deliver on their original promise. The more practical move is to layer modern governance on top of what already exists: wrapping legacy systems in a new layer that intercepts data flows and automates evidence generation, without demanding that the old plumbing change overnight. In software engineering, this is known as the strangler fig pattern. In compliance terms, it means you stop waiting for the perfect foundation and start building control into what's actually running today.
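To make the wrapping idea concrete, here is a minimal Python sketch, offered as an illustration rather than a reference design. It assumes a hypothetical legacy function (`legacy_sanctions_check`) and a hypothetical in-memory `EvidenceLog`; neither name comes from a real product. Each call through the wrapper leaves a hash-chained evidence record, so the legacy code itself stays untouched.

```python
import hashlib
import json
from datetime import datetime, timezone
from functools import wraps


class EvidenceLog:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        # Hash a canonical JSON form so verification is deterministic.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, control, inputs, outcome):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "control": control,
            "inputs": inputs,
            "outcome": outcome,
            "at": datetime.now(timezone.utc).isoformat(),
            "prev": prev_hash,
        }
        self.entries.append({**body, "hash": self._digest(body)})

    def verify(self):
        """Return True only if no entry has been altered or reordered."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or entry["hash"] != self._digest(body):
                return False
            prev = entry["hash"]
        return True


def with_evidence(log, control):
    """Wrap an existing call so every invocation leaves an evidence record."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            result = func(*args, **kwargs)
            log.record(control, {"args": list(args), "kwargs": kwargs}, result)
            return result
        return wrapper
    return decorator


def legacy_sanctions_check(name):
    # Stand-in for a call into an existing system (assumption for illustration).
    return "hit" if name == "Blocked Ltd" else "clear"


log = EvidenceLog()
screened = with_evidence(log, "sanctions-screening")(legacy_sanctions_check)
screened("Acme Holdings")
screened("Blocked Ltd")
print(len(log.entries), log.verify())
```

In a real deployment the log would sit in durable, access-controlled storage rather than in memory. The design point is only that evidence becomes a by-product of the call, not a separate manual task.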

From there, two things tend to move the needle most. First, treating regulatory rules as independent, machine-readable services, decoupled from the core banking platform so they can be updated when requirements change without triggering a full release cycle. Second, embedding controls directly into the delivery pipeline, so the system generates its own audit trail as each change is built, tested, and deployed. The evidence-gathering sprint that typically precedes every release simply disappears. One team we work with reduced their pre-release compliance effort by more than half this way, not by doing less, but by making the process automatic rather than manual.
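As a rough illustration of rules held as machine-readable data, the Python sketch below evaluates a client record against rule definitions loaded from JSON. The rule identifiers, field names, and thresholds are invented for the example. In practice the definitions would be served from a separate rules service, not embedded in the code.

```python
import json
import operator

# Hypothetical rule definitions, kept outside the core platform.
RULES_JSON = """
[
  {"id": "KYC-001", "field": "id_verified", "op": "eq", "value": true},
  {"id": "AML-014", "field": "risk_score", "op": "le", "value": 70},
  {"id": "SAN-003", "field": "sanctions_hits", "op": "eq", "value": 0}
]
"""

# The small set of comparison operators the rule format supports.
OPS = {"eq": operator.eq, "le": operator.le, "ge": operator.ge}


def evaluate(rules, record):
    """Return one result per rule; the results double as audit evidence."""
    results = []
    for rule in rules:
        actual = record.get(rule["field"])
        # A missing field counts as a failure instead of raising an error.
        passed = actual is not None and OPS[rule["op"]](actual, rule["value"])
        results.append({"rule": rule["id"], "actual": actual, "passed": passed})
    return results


rules = json.loads(RULES_JSON)
applicant = {"id_verified": True, "risk_score": 82, "sanctions_hits": 0}
results = evaluate(rules, applicant)
failed = [r["rule"] for r in results if not r["passed"]]
print(failed)  # ['AML-014']
```

Changing the risk threshold here means publishing a new rule definition; the `evaluate` function and the systems that call it do not need a release.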

The real role of AI

Artificial intelligence has a particular role to play in this shift, but not in the way it is most commonly discussed.

Most of the conversation about AI in banking focuses on models — how accurate they are, what they can do, what they might eventually replace. While this is interesting, it somewhat misses the point. What actually moves the needle is orchestration: stringing together several AI-driven steps across an end-to-end process, keeping a human in the loop at the decision points that genuinely need judgement, and routing edge cases to the right specialist rather than dumping them into a generic exception queue.

A clever model dropped into one step of a broken process won't fix much. An orchestrated workflow — with compliance built into each stage — changes the economics entirely.

For example, an onboarding process redesigned along these lines would not simply replace a manual step with a model. It would orchestrate document verification, entity resolution, risk scoring, and regulatory screening as a connected workflow — surfacing only genuine exceptions and ambiguities for human judgement.
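A simplified Python sketch of that kind of orchestration follows. The step functions are stubs, and the confidence scores, the 0.80 threshold, and the specialist queue names are all assumptions made for illustration. The point is the shape: steps run as one connected workflow, and a case is routed to a named specialist only when a step reports low confidence.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class StepResult:
    step: str
    confidence: float  # 0.0 to 1.0, as reported by the step


@dataclass
class Case:
    client: dict
    results: list = field(default_factory=list)
    routed_to: Optional[str] = None  # None means no human review was needed


# Stub steps; real ones would call models or screening services.
def verify_documents(client):
    return StepResult("document_verification", 0.98)


def resolve_entities(client):
    # In this stub, complex group structures lower the confidence.
    score = 0.95 if client["entities"] <= 3 else 0.60
    return StepResult("entity_resolution", score)


def score_risk(client):
    return StepResult("risk_scoring", 0.90)


def screen_regulatory(client):
    return StepResult("regulatory_screening", 0.97)


# Each step is paired with the specialist queue that handles its exceptions.
PIPELINE = [
    (verify_documents, "document-ops"),
    (resolve_entities, "corporate-structures-team"),
    (score_risk, "risk-analysts"),
    (screen_regulatory, "sanctions-desk"),
]

THRESHOLD = 0.80  # below this, a human decides


def onboard(client):
    case = Case(client)
    for step, specialist in PIPELINE:
        result = step(client)
        case.results.append(result)  # every step leaves a record
        if result.confidence < THRESHOLD:
            case.routed_to = specialist  # route to the right expert, then stop
            break
    return case


simple = onboard({"name": "Acme Ltd", "entities": 1})
complex_case = onboard({"name": "Globex Group", "entities": 12})
print(simple.routed_to)        # None
print(complex_case.routed_to)  # corporate-structures-team
```

The straightforward client passes through all four steps without human involvement, while the multi-entity group stops at entity resolution and lands with the team best placed to resolve it, not in a generic exception queue.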

The leadership problem

There is, however, a final dimension to this shift that is more organisational than technical. Even with the architecture right and the AI humming, the gains only count if the capacity they release is actively redeployed — towards better client service, faster product development, sharper risk analysis. This is the step most often overlooked, and the one most likely to determine whether the investment actually pays off.

Without deliberate leadership action, old patterns reassert themselves. Headcount stays flat. Work expands to fill the space. The compliance tax gets reshuffled rather than reduced.

Which is why the automation paradox is, when you strip it back, as much a leadership challenge as an engineering one. The institutions that crack it will be those whose senior teams treat compliance as a capability worth designing properly — and then have the discipline to redirect the bandwidth it frees up, rather than letting it quietly disappear back into the machine.

So the paradox isn't really about technology. It's about design choices — and whether safety and speed have to work against each other, or whether they can, with the right architecture and the right leadership, reinforce one another.

Banks that grasp this in 2026 will get back the capacity to actually build things worth building. It turns out that velocity and control aren't competing priorities — they're the same engineering problem, looked at from different angles.

David Mitchell, Chief Growth Officer
